Google Duo is testing a new codec to get you better call quality even over poor connections

Lyra, the new codec, is being trained with thousands of hours of audio with speakers in over 70 languages using open-source audio libraries.

The world might be preparing for 5G, but the vast majority of people are still making do with slow data speeds and poor connectivity. To help deal with that, Google Duo is using compression techniques to deliver the best possible audio and video experience over spotty connections.

Google is testing a new audio codec that aims to substantially improve audio quality over poor network connections. The Google AI team introduced ‘Lyra’, a low-bitrate speech codec, in a detailed blog post. Lyra's basic architecture involves “extracting distinctive speech attributes (features) in the form of log mel spectrograms”. These features are then compressed, transmitted over the network, and recreated on the other end using a generative model.
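To give a rough idea of what extracting log mel spectrogram features looks like, here is a minimal sketch in Python with NumPy. It is not Lyra's actual code: the function names, the 16 kHz sample rate, the 40 ms frame length and the 40 mel bands are all assumptions chosen for illustration.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters spaced evenly on the mel scale (illustrative)."""
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sample_rate / 2), n_mels + 2)
    hz_points = mel_to_hz(mel_points)
    fft_freqs = np.linspace(0.0, sample_rate / 2, n_fft // 2 + 1)
    bank = np.zeros((n_mels, n_fft // 2 + 1))
    for i in range(n_mels):
        lo, center, hi = hz_points[i], hz_points[i + 1], hz_points[i + 2]
        rising = (fft_freqs - lo) / (center - lo)
        falling = (hi - fft_freqs) / (hi - center)
        bank[i] = np.maximum(0.0, np.minimum(rising, falling))
    return bank

def log_mel_spectrogram(signal, sample_rate=16000, frame_ms=40, n_mels=40):
    """One feature vector per frame; all parameter values are assumptions."""
    frame_len = sample_rate * frame_ms // 1000
    n_frames = len(signal) // frame_len
    # Cut the signal into non-overlapping frames and window each one.
    frames = signal[: n_frames * frame_len].reshape(n_frames, frame_len)
    power = np.abs(np.fft.rfft(frames * np.hanning(frame_len), axis=1)) ** 2
    # Pool the power spectrum into mel bands, then take the log.
    mel = power @ mel_filterbank(n_mels, frame_len, sample_rate).T
    return np.log(mel + 1e-6)  # small floor avoids log(0)

# One second of a 440 Hz tone stands in for speech here.
t = np.arange(16000) / 16000.0
features = log_mel_spectrogram(np.sin(2 * np.pi * 440.0 * t))
print(features.shape)  # (25, 40)
```

One second of 16,000 samples collapses into 25 short feature vectors, and it is this kind of compact description, rather than the waveform itself, that a codec like Lyra would go on to compress and transmit.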

So far, this is also what traditional parametric codecs do. However, Lyra uses a new high-quality audio generative model that can extract critical parameters from speech and can also reconstruct speech using minimal amounts of data.

The new generative model that's being used in Lyra is built upon Google's older work on WaveNetEQ, a “generative model-based packet-loss-concealment system” that's used on Google Duo currently.


Google explained that this approach has made Lyra “on par with the state-of-the-art waveform codecs” that are used in many streaming and communication platforms. The benefit Lyra has over these codecs, Google claims, is that it does not send the signal sample by sample, an approach that requires a higher bitrate and therefore more data.

Lyra uses a “cheaper recurrent generative model” that works at a lower rate but generates multiple signals at different frequencies in parallel that are combined later into “a single output signal at the desired sample rate”.
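The idea of combining several lower-rate band signals into one full-rate output can be illustrated with a very simple two-band (Haar) filterbank. This is only a sketch of the general principle: the article does not say which filterbank or how many bands Lyra uses, and in Lyra the band signals would come from the generative model rather than from splitting an existing signal as done here. The function names are hypothetical.

```python
import math

SCALE = 1.0 / math.sqrt(2.0)

def haar_split(signal):
    """Split a full-rate signal into a low and a high band at half the rate."""
    pairs = list(zip(signal[0::2], signal[1::2]))
    low = [(a + b) * SCALE for a, b in pairs]
    high = [(a - b) * SCALE for a, b in pairs]
    return low, high

def haar_combine(low, high):
    """Merge two half-rate band signals into a single full-rate output."""
    out = []
    for l, h in zip(low, high):
        out.append((l + h) * SCALE)
        out.append((l - h) * SCALE)
    return out

# A short test signal: 16 samples of a sine wave.
original = [math.sin(2 * math.pi * n / 16.0) for n in range(16)]
low_band, high_band = haar_split(original)
rebuilt = haar_combine(low_band, high_band)

print(len(low_band), len(high_band), len(rebuilt))  # 8 8 16
```

Each band carries half as many samples as the output, so a model producing the bands in parallel has to take fewer sequential steps than one that generates every output sample in turn.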

Running a generative model like this on a mid-range device “yields a processing latency of 90ms” and Google says that this is in line with other traditional speech codecs.

This, paired with the AV1 codec for video, can make video chats possible even for users on an “ancient 56kbps dial-in modem”. Google explained that Lyra is designed to operate in “heavily bandwidth-constrained environments, like 3kbps”.
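Some back-of-the-envelope arithmetic shows why 3kbps matters on a 56kbps link. The 3kbps and 56kbps figures come from the article; the uncompressed reference of 16 kHz, 16-bit mono audio is an assumption used only for comparison, and the helper name is hypothetical.

```python
def pcm_bitrate_kbps(sample_rate_hz, bits_per_sample):
    """Bitrate of uncompressed mono audio sent sample by sample."""
    return sample_rate_hz * bits_per_sample / 1000.0

LYRA_KBPS = 3.0    # figure quoted in the article
MODEM_KBPS = 56.0  # figure quoted in the article

# Assumed reference: 16 kHz, 16-bit mono speech with no compression.
raw_kbps = pcm_bitrate_kbps(16000, 16)

print(raw_kbps)                         # 256.0
print(round(raw_kbps / LYRA_KBPS, 1))   # 85.3
print(MODEM_KBPS - LYRA_KBPS)           # 53.0
```

Under these assumptions the raw audio alone would need more than four times the modem's capacity, while speech at 3kbps leaves about 53kbps of the link for video.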

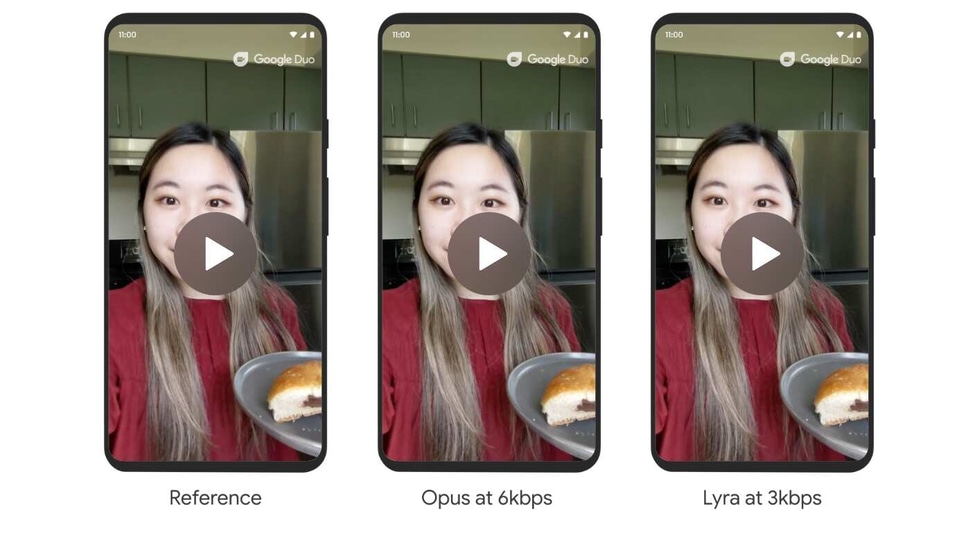

Google also added that Lyra can outperform codecs like Speex, MELP and AMR at very low bitrates, as well as royalty-free, open-source codecs like Opus. Google has shared some speech samples on its blog.

Lyra is being trained “with thousands of hours of audio with speakers in over 70 languages using open-source audio libraries and then verifying the audio quality with expert and crowdsourced listeners”, Google said. And the new codec is already rolling out on Google Duo. Lyra is currently being used for speech use cases, but Google is also exploring how to use it as a general-purpose audio codec.
