Google Gemini Ultra AI unveiled; your Google Bard experience set to improve; know how

Google has launched Gemini AI, its next-generation foundation model. The company claims its top-of-the-line version, called Gemini Ultra, can outperform both human experts and ChatGPT! Know all about the Google Gemini Ultra AI and how it improves Google Bard.

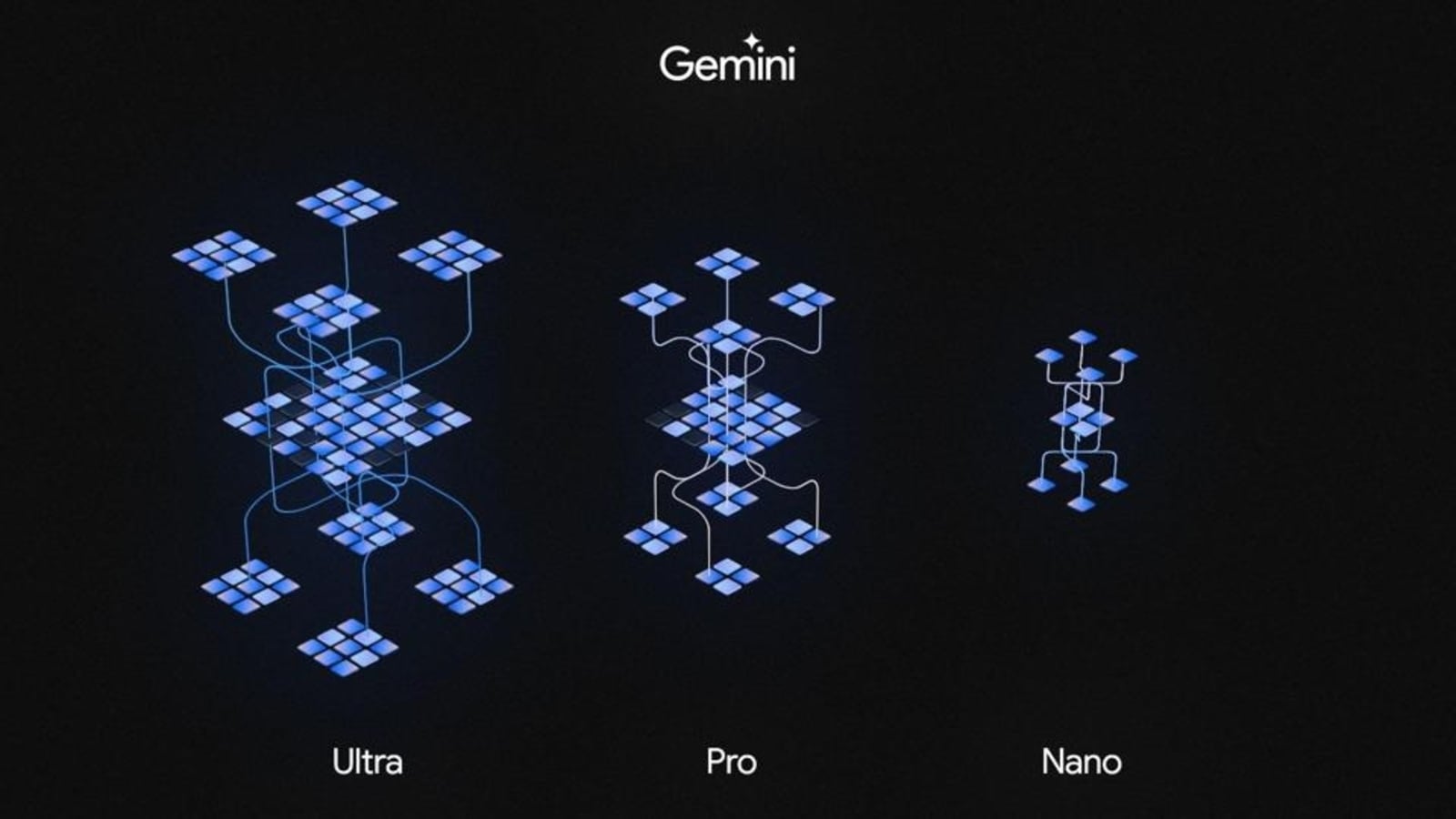

The artificial intelligence (AI) race is heating up, with the world's biggest tech companies making efforts to rapidly advance this technology. With OpenAI's GPT being extremely popular, Google has now launched its next-generation foundation model called Gemini. It has been launched in three sizes: Nano, Pro, and Ultra. While the Gemini Nano model can run natively on Android devices such as Google Pixel phones, Gemini Pro has been integrated into Google Bard, the company's own AI chatbot. Gemini Ultra, on the other hand, is the top-of-the-line version. Google claims it is even more powerful than ChatGPT and is the most powerful Large Language Model (LLM) ever created. Know all about the Google Gemini Ultra AI.

Google Gemini Ultra: Details

According to Google, Gemini Ultra is its most capable and advanced AI model, meant for highly complex tasks. It has been developed by Google DeepMind in collaboration with other teams, including Google Research. It was built from the ground up to be multimodal, which means it can generalize and seamlessly understand, operate across, and combine different types of information, including text, code, audio, image, and video. Google says Gemini was trained on AI-optimized infrastructure using its in-house designed Tensor Processing Units (TPUs) v4 and v5e. According to the company, the Gemini architecture also allows the model to be served efficiently at scale on TPU accelerators.

How will Gemini Ultra improve your Google Bard experience?

Google has already integrated Gemini Pro into Bard, and it improves various aspects of the AI chatbot, including understanding, summarizing, reasoning, coding, and planning. You can already try out Gemini-powered Bard with text-based prompts in more than 170 countries. The company says that support for other modalities will arrive soon.

Google has also announced that it will launch a new version of Google Bard called “Bard Advanced”. It will be powered by the Gemini Ultra AI model and will be able to act upon information in various forms, including text, images, audio, video, and code.

Google says, “One of the first ways you'll be able to try Gemini Ultra is through Bard Advanced, a new, cutting-edge AI experience in Bard that gives you access to our best models and capabilities. We're currently completing extensive safety checks and will launch a trusted tester program soon before opening Bard Advanced up to more people early next year.”

Gemini vs GPT-4: Which is better?

Google has compared Gemini's benchmark results against those of OpenAI's GPT-4, and the company claims that Gemini Ultra came out ahead in 30 out of 32 benchmarks. The benchmarks cover massive multitask language understanding, multistep reasoning, reading comprehension, commonsense reasoning, and Python code generation.

In a blog post, Google said, “We've been rigorously testing our Gemini models and evaluating their performance on a wide variety of tasks. From natural image, audio, and video understanding to mathematical reasoning, Gemini Ultra's performance exceeds current state-of-the-art results on 30 of the 32 widely-used academic benchmarks used in large language model (LLM) research and development.”

The first and most significant benchmark was MMLU (massive multitask language understanding), which uses a combination of 57 subjects such as math, physics, history, law, medicine, and ethics to test both world knowledge and problem-solving abilities. As per the company, Gemini Ultra became the first model to outperform human experts on MMLU, with a score of 90.0 percent. GPT-4, in comparison, scored 86.4 percent.

Gemini Ultra was also ahead in the Big-Bench Hard (multistep reasoning) and DROP (reading comprehension) benchmarks under the Reasoning umbrella, where it scored 83.6 percent and 82.4 percent respectively, compared to GPT-4's 83.1 percent and 80.9 percent.
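For readers who want to see how wide these gaps are, the short Python sketch below works out the difference in percentage points for the three benchmarks quoted above. It uses only the scores reported in this article; the names `scores` and `margins` are illustrative and do not come from Google or OpenAI.

```python
# Scores (in percent) as quoted in this article, which attributes them to Google.
# The variable and function names are hypothetical, chosen for illustration.
scores = {
    "MMLU": {"Gemini Ultra": 90.0, "GPT-4": 86.4},
    "Big-Bench Hard": {"Gemini Ultra": 83.6, "GPT-4": 83.1},
    "DROP": {"Gemini Ultra": 82.4, "GPT-4": 80.9},
}


def margins(table):
    """Return Gemini Ultra's lead over GPT-4 in percentage points."""
    return {
        name: round(row["Gemini Ultra"] - row["GPT-4"], 1)
        for name, row in table.items()
    }


for name, gap in margins(scores).items():
    print(f"{name}: Gemini Ultra leads by {gap} percentage points")
```

On these figures, the lead is largest on MMLU and narrowest on Big-Bench Hard, where the two models are separated by half a percentage point.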
