Clean-up crews: Meet the guys who scrub the web of nudity, violence, porn

A small but growing group of Indian workers are spending their days scouring the internet for gore, fighting off scammers and spammers.

Imagine waking up to a video of a beheading playing on your social media feed. Or swiping through a dating app to find a prospective beau baring it all in a desperate attempt to land a date.

Across the country, there are teams of young engineers whose job it is to make sure that doesn't happen.

They're called web content moderators, and they're the guys who scrub websites of illicit, explicit and undesirable content, using a set of rules or guidelines to determine what user-generated content ought to be purged.

The job involves sifting through about 2,000 images per hour, or up to 5,000 dating profiles a day. Porn, violence, gore, nudity, scams and sexual solicitation are the most common violations.

"About 20% of what they view every day is offensive. The kind of clients we deal with in India do not have extremely graphic content like brutal murders or rapes or child pornography showing up on their portals," says Suman Howlader, founder of Foiwe Info Global Solutions, which started providing moderation services in 2010.

In most cases, moderation follows a two-step protocol. Artificial intelligence (AI) is used for the first round of identifying non-permissible content. Humans then go through that content and identify the posts that fall into the 'grey area'.

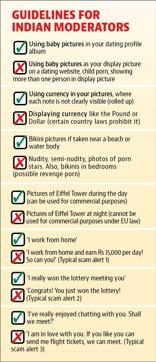

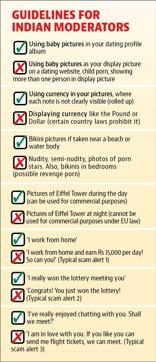

Whenever a user of a dating site creates a profile, Foiwe moderators are required to approve or flag all the elements in it, from pictures to personal information. "Male users cannot have female display pictures; group pictures, baby pictures and pictures of porn stars as DPs are a no-no," says Howlader.

The guidelines change depending on the client. While nude pictures are always deleted, some clients permit bikini pictures, as long as the setting is a beach and not a bedroom, because the latter could be a case of revenge porn.

For commercial websites, the guidelines are quite different.

"Some country laws prohibit showing close-ups of their currency notes. There's even an EU law banning the commercial use of pictures of the Eiffel Tower taken at night," explains Howlader.

The secret 'web police'

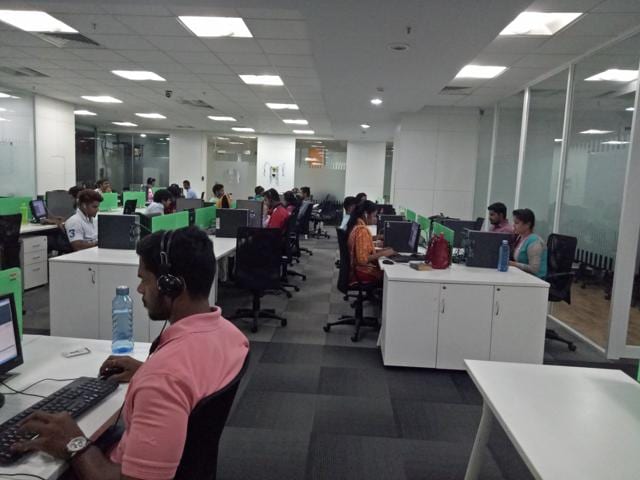

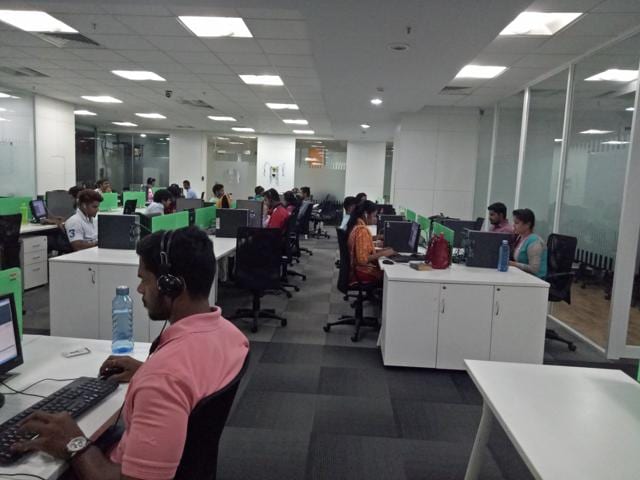

In a small office in Bangalore, about 100 employees of Foiwe sit in rows, leaning into their screens with rapt attention. They spend eight hours a day weeding out thousands of unwanted photograph, video and text uploads on social media channels, dating and e-commerce websites based in India and in countries such as Spain, the US, UK, Canada and Russia.

The coolest team to be on is the fraud detection or 'Fake Team'.

"We like to think of ourselves as detectives," says team leader Rajeev Srivastav, 29, with a grin. "We're constantly on the lookout for profiles that are not genuine, trying to understand user behaviour to determine which accounts are real and which are fakes."

Armed with a database of blacklisted IP addresses, it's up to these guys to bait and block the scammers and spammers.

Scammers tend to fall into three categories: those who flood users' inboxes with fake product and job offers ('Work from home and earn ₹15,000 per day!'), those who pretend the user has won a lottery, and those who pose as lovers and end up asking for money or flight tickets "so we can finally meet".

"Suspicious activity includes users who declare their 'undying love' to 100 people at the same time, telling sob stories and asking for monetary help. Also, widows saying they want to share their property with someone trustworthy. To be honest, my work doesn't seem very different from that of the cybercrime departments of the state police," says Srivastav, laughing.

"A video of an ISIS beheading kept popping up on one of the sites I was moderating. It was gruesome, but that's my job. Somebody has to filter out the content so others don't see it," says senior content moderator Chandan Nayak.

"Protecting lovesick users from being duped by heartless scammers makes me love my job a little more."

The fact that India's web content moderators get more scam alerts and semi-nudity than, say, beheadings or child pornography also makes their job easier.

In the US, for instance, moderators for Microsoft were reportedly exposed to so many grisly videos that they sued the company claiming they had developed symptoms of post-traumatic stress disorder.

In India, job interviews typically include questions about the applicant's social media experiences, to ensure that the exposure they face in the course of their moderation won't be a complete shock.

"We look out for good observation skills in applicants. Being intuitive is a major plus point. Then they have a two- to three-week training session where we brief them about what the job entails," says Suresh Reddy, founder and managing director of InfoEsearch, a Hyderabad-based digital services company that began content moderation five years ago. "We have counselling sessions twice a month and if someone is uncomfortable with a particular type of moderation, we transfer them to a different department."

Small players in a big market

The content moderation business in India is growing in metropolises like Bengaluru, Delhi, Hyderabad and Kolkata.

"This part of the industry is something that was non-existent ten years ago and is all set to pick up, because of the huge and growing demand," says Aravind Rao, chief operations officer at InfoEsearch.

"Now a lot of Indian companies are open to the idea of getting their content moderated. Several Fortune 500 IT companies have recently set up social content moderation divisions in cities like Bangalore over the last few years," adds Howlader.

Almost 80% of his company's revenue comes from commercial content moderation, he says. The seven-year-old company also offers software testing and IT consultation services.

The scope in this sector prompted Howlader to set up another branch in Kolkata, with a team of 15 moderators. The company hopes to start operations there in the coming month.

"Algorithms are useful in keeping out unwanted stuff, but people are creative enough to beat the system," explains Pranav Savlani, business development head of Squadrun, which provides app-based commercial content moderation in San Francisco and Delhi. "If nudity is rejected by the algorithm, the next challenge could be rejecting images with 'narcotics' or 'inappropriate pictures of famous celebrities' being used on e-commerce portals."

Squadrun has a workforce of 75,000 freelancers, who act as the last shield of moderators for e-commerce websites.

Siddharth Pillai, co-director of Aarambh India, the country's first internet hotline for reporting online child sexual abuse imagery, run in partnership with the UK-based watchdog Internet Watch Foundation (IWF), cautions that moderators must take frequent breaks in which they leave their cubicles and indulge in light recreation.

"Additionally, they must undergo periodic counselling to ensure that constant exposure to abusive content doesn't cause trauma," he says.

However, it's not all dull and dreary in the world of moderation. "Recently, while moderating a website I came across that hilarious video of US President Donald Trump appearing to push the Montenegro prime minister at the NATO summit in Brussels. I showed it to my colleagues and there were ripples of laughter across the room. We're often among the first to see such viral content," says Santosh Kumar, 29, a content moderation team leader at InfoEsearch. "We get to have our share of fun."

For Shailaja Chowdhary, 27, who has been a content moderator at InfoEsearch for four years, the job brings a sense of purpose.

"I've come across videos of children being physically and sexually abused and my heart goes out to them. Instead of becoming depressed, I feel it's my duty to spread awareness and talk to people about it and spread the word about such violence still being rampant across the globe," she says.
