Facebook is finally going to tell you if you comment on or share Covid-19 misinformation

Facebook is finally using one of its most potent tools against Covid-19 misinformation: it will directly tell people if they have engaged with it.

After trying to tackle misinformation on many levels, Facebook is now deploying its most potent tool in the battle against Covid-19 misinformation. According to a report in Fast Company, Facebook will send notifications to anyone who has commented on, liked or shared Covid-19 misinformation that has been taken down for violating the platform's terms of service.

After the notification, users will be directed to trustworthy sources where they can get accurate information.

These proactive notifications are Facebook's latest attempt to let people know what misinformation has been removed from the site. Facebook first launched the concept in April this year as a newsfeed post that directed users who had engaged with misinformation to a World Health Organisation (WHO) landing page debunking Covid-19 myths.

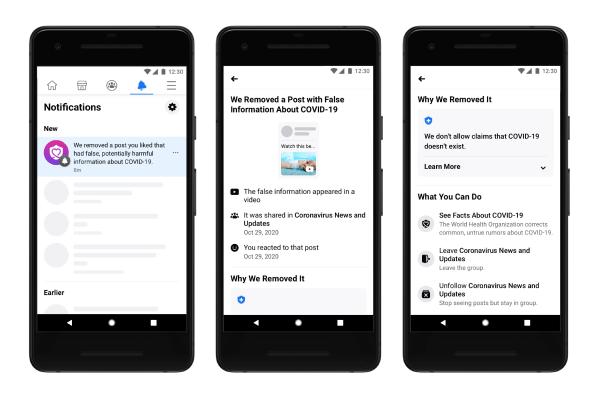

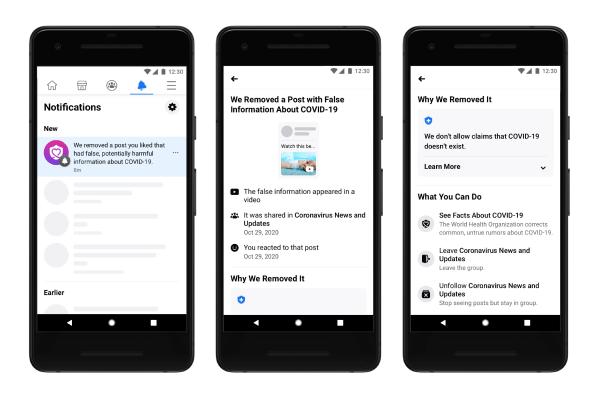

While that approach was fairly subtle, Facebook will now reach out with direct notifications that state: “We removed a post you liked that had false, potentially harmful information about Covid-19.”

Clicking on the notification takes you to a page showing a thumbnail of the false content, a description of whether you liked, shared or commented on it, and a note about why it was removed from Facebook.

The page will also offer follow-up actions, such as the option to unsubscribe from the group that originally posted the content and to “see facts” about Covid-19.

Facebook said it found that many users did not understand why posts in their newsfeed were urging them to seek facts about Covid-19. “People didn't really understand why they were seeing this message. There wasn't a clear link between what they were reading on Facebook from that message and the content they interacted with,” said Valerio Magliulo, a product manager at Facebook who worked on the new notification system.

Facebook has therefore redesigned the experience so that users understand exactly which content they shared, liked or commented on was false.

The alert is written to be informative and nonjudgmental. Facebook isn't going to try to correct the record; instead, it will explain why a given post was removed from the platform.

“The challenge we were facing and the fine balance we're trying to strike is how do we provide enough information to give the user context about [the interaction] we're talking about without reexposing them to misinformation,” says Magliulo.

While researchers feel this is a good way to help people understand their news consumption habits, they believe it may have come too late to effectively control Covid-19 misinformation.
