Twitter's reply prompts for offensive tweets improved to detect friendly banter

Twitter displays prompts for tweet replies that contain offensive or harmful words. It has improved the algorithms to better understand the nuance in different kinds of conversations.

Twitter started testing prompts last year to help people reconsider a “potentially harmful or offensive reply” before sending it. It has since improved the prompts system and is rolling it out to Android and iOS users who have English set as their default language.

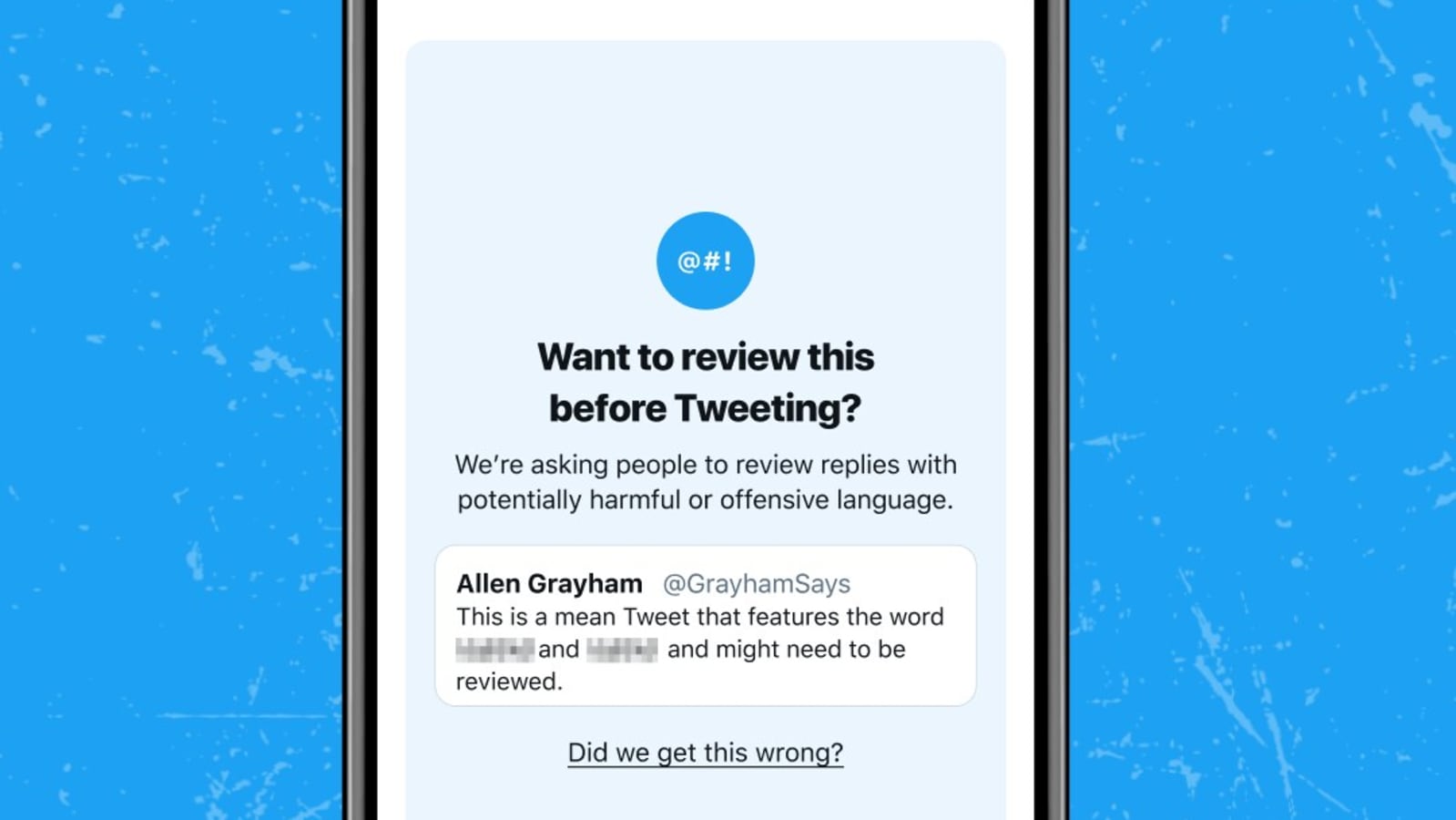

Twitter doesn't stop users from tweeting what they want, but it shows a prompt asking them to review what they've written, highlighting the words that may be harmful. Twitter showed these prompts for replies that contained insults, strong language or hateful remarks. During its tests, Twitter said it found that some of these prompts were unnecessary because “the algorithms powering the prompts struggled to capture the nuance in many conversations and often didn't differentiate between potentially offensive language, sarcasm, and friendly banter.”

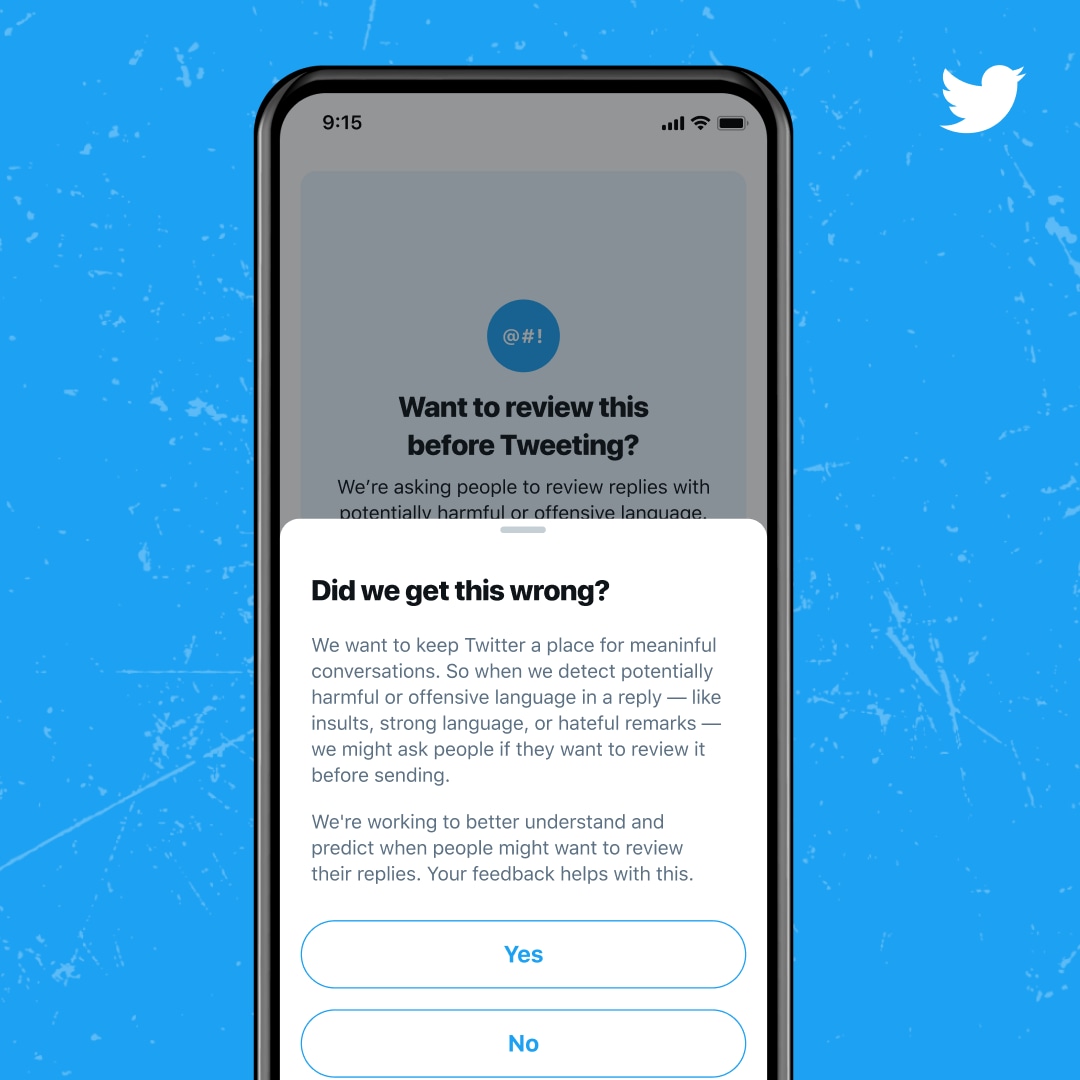

Twitter has now improved its algorithms to better understand conversations. Before showing a prompt for an offensive reply, Twitter will consider the relationship between the author and the replier, including how often they interact. The algorithms have also been improved to understand situations where “language may be reclaimed by underrepresented communities and used in non-harmful ways.” At the same time, Twitter has improved its systems to detect strong language more accurately. Users will also be asked whether a prompt was wrong and given the option to send Twitter feedback.

It will be interesting to see how this feature works out in the future. In the tests conducted so far, Twitter said the prompts did have some effect on people's tweeting behaviour. Twitter found that the prompts made around 34% of people edit their reply or not send it at all. The prompts also resulted in 11% fewer offensive replies in the future, and people who were prompted were less likely to receive offensive and harmful replies back.
