Google Search’s new AI tools can decipher your terribly spelled questions

Google’s subtle “did you mean” feature already shows the words spelled correctly in case you want to check and learn.


Google announced a host of improvements at its “Search On” event that will roll out to the search service over the coming weeks and months. The additions and changes focus primarily on using AI and machine learning techniques to provide better search results. The most important of them is a new spell-checking tool that Google says will help decipher even the worst-spelled questions.

Google's Head of Search, Prabhakar Raghavan, said that about 15% of the search queries Google gets every day are ones the company has never seen before, so it must constantly work to improve its results.


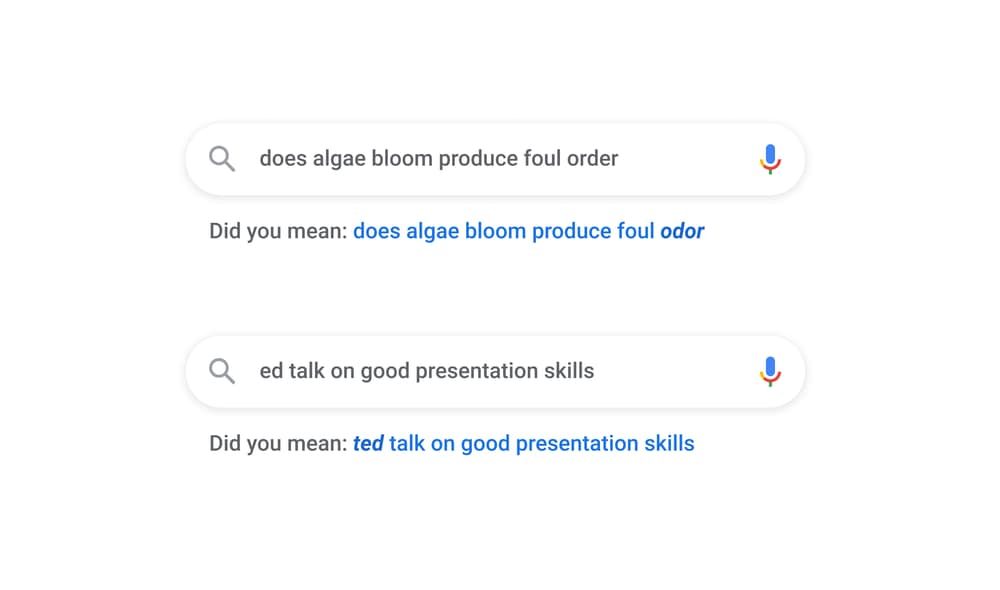

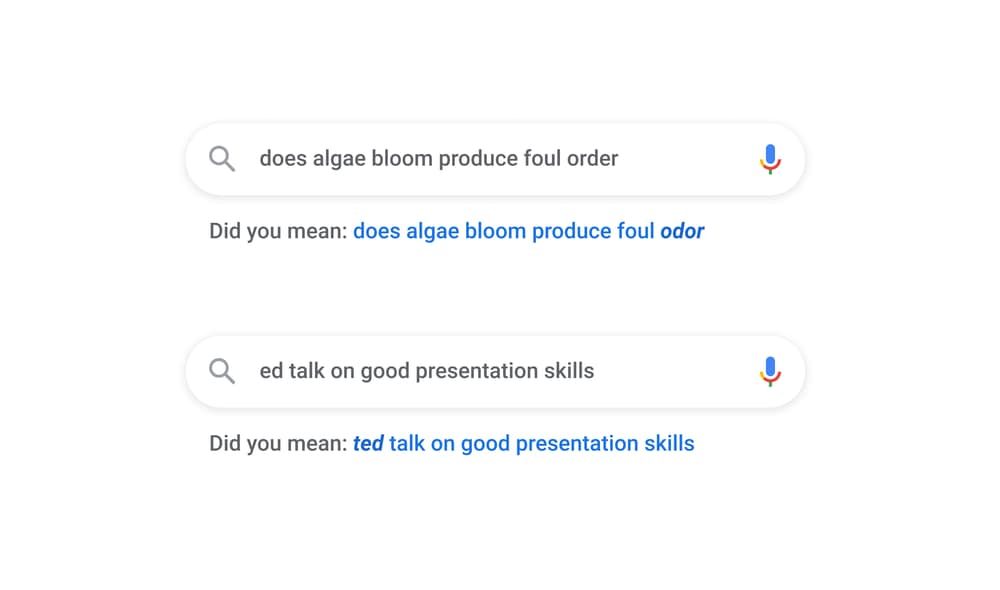

A big reason behind these never-seen-before queries is poor spelling. According to Cathy Edwards, VP Engineering at Google, one in every 10 search queries on Google is misspelled. Google has been trying to help with this through its “did you mean” feature, which suggests the proper spelling.

By the end of this month, Google will release a massive update to that “did you mean” feature, which will use a new spelling algorithm powered by a neural net with 680 million parameters. It runs in “under three milliseconds after each search”, and Google promises it will offer even better suggestions for misspelled words.

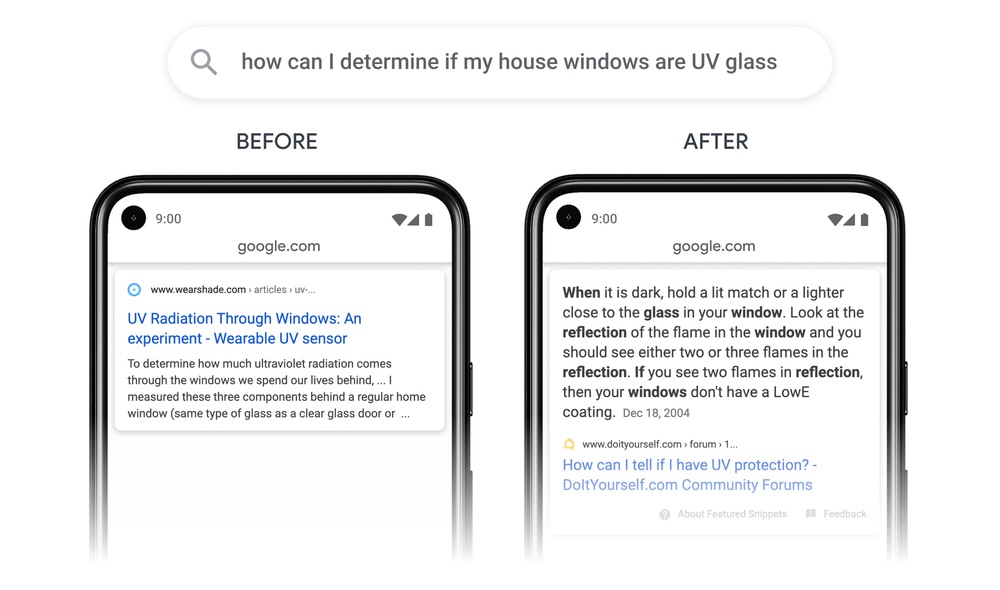

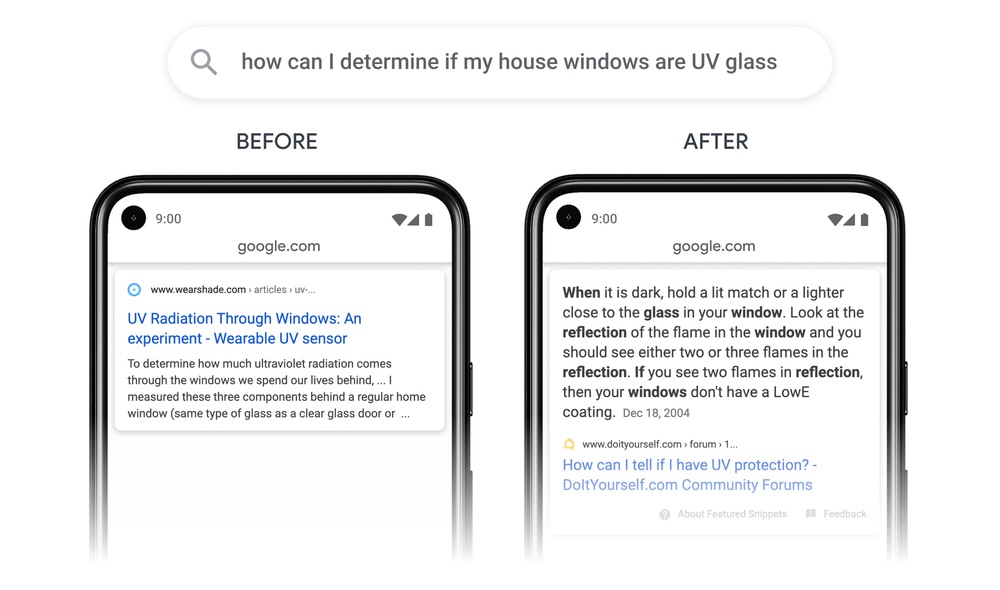

Another upcoming feature is that Google Search will be able to index individual passages from webpages instead of only the whole page. For example, if a user searches for “how can I determine if my house windows are UV glass”, the new algorithm will be able to find a single paragraph on a DIY forum that has the answer. According to Edwards, when the algorithm starts to roll out next month, it will improve 7% of queries across all languages.
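To make the idea of passage-level indexing concrete, here is a minimal toy sketch in Python. It is not Google's algorithm, which has not been published in this form: it simply splits each page into paragraphs, indexes every paragraph on its own, and picks the one sharing the most words with the query. The function names, the example URLs and the page text are all hypothetical placeholders.

```python
import re

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def build_passage_index(pages):
    """Index every paragraph separately instead of the page as a whole.

    `pages` maps a (hypothetical) URL to the page text. Paragraphs are
    assumed to be separated by blank lines.
    """
    index = []
    for url, text in pages.items():
        for passage in text.split("\n\n"):
            passage = passage.strip()
            if passage:
                index.append((url, passage, tokenize(passage)))
    return index

def best_passage(index, query):
    """Return the (url, passage) pair sharing the most words with the query."""
    query_words = tokenize(query)
    url, passage, _ = max(index, key=lambda entry: len(query_words & entry[2]))
    return url, passage

# Placeholder pages, invented purely for illustration.
pages = {
    "https://example.com/diy-forum": (
        "Welcome to the forum. Please read the rules before posting.\n\n"
        "This reply explains how to determine if your house windows are UV glass.\n\n"
        "Other threads cover paint, plumbing and flooring."
    ),
    "https://example.com/glass-shop": "We sell glass of every kind for every house.",
}

index = build_passage_index(pages)
url, passage = best_passage(
    index, "how can I determine if my house windows are UV glass"
)
print(url)
print(passage)
```

Word overlap is only a stand-in for relevance here. The point of the sketch is that the one useful paragraph on the forum page can be returned by itself, even though most of that page is about something else.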

Google is also using AI to divide broader searches into subtopics to help provide better results.

Google is also going to use computer vision and speech recognition to automatically tag videos and divide them into parts, an automated version of the existing chapter tools. For example, cooking or sports videos can be parsed and automatically divided into chapters. This is similar to Google's existing work on surfacing specific podcast episodes in search instead of just showing the general feed.
