No fighting! Facebook’s AI moderator to alert group admins when users are causing trouble in comments

One of the new AI tools Facebook is deploying to help manage communities on the platform will warn group admins when members of the group are fighting in the comments.

Facebook has recently launched a whole suite of tools to help group admins control their communities better. Some of these tools offer a clearer overview of posts and members. Others are designed to help admins tackle conflict in the comments and in the communities they head, such as an AI-powered feature that can identify “contentious or unhealthy conversations” taking place in comments.

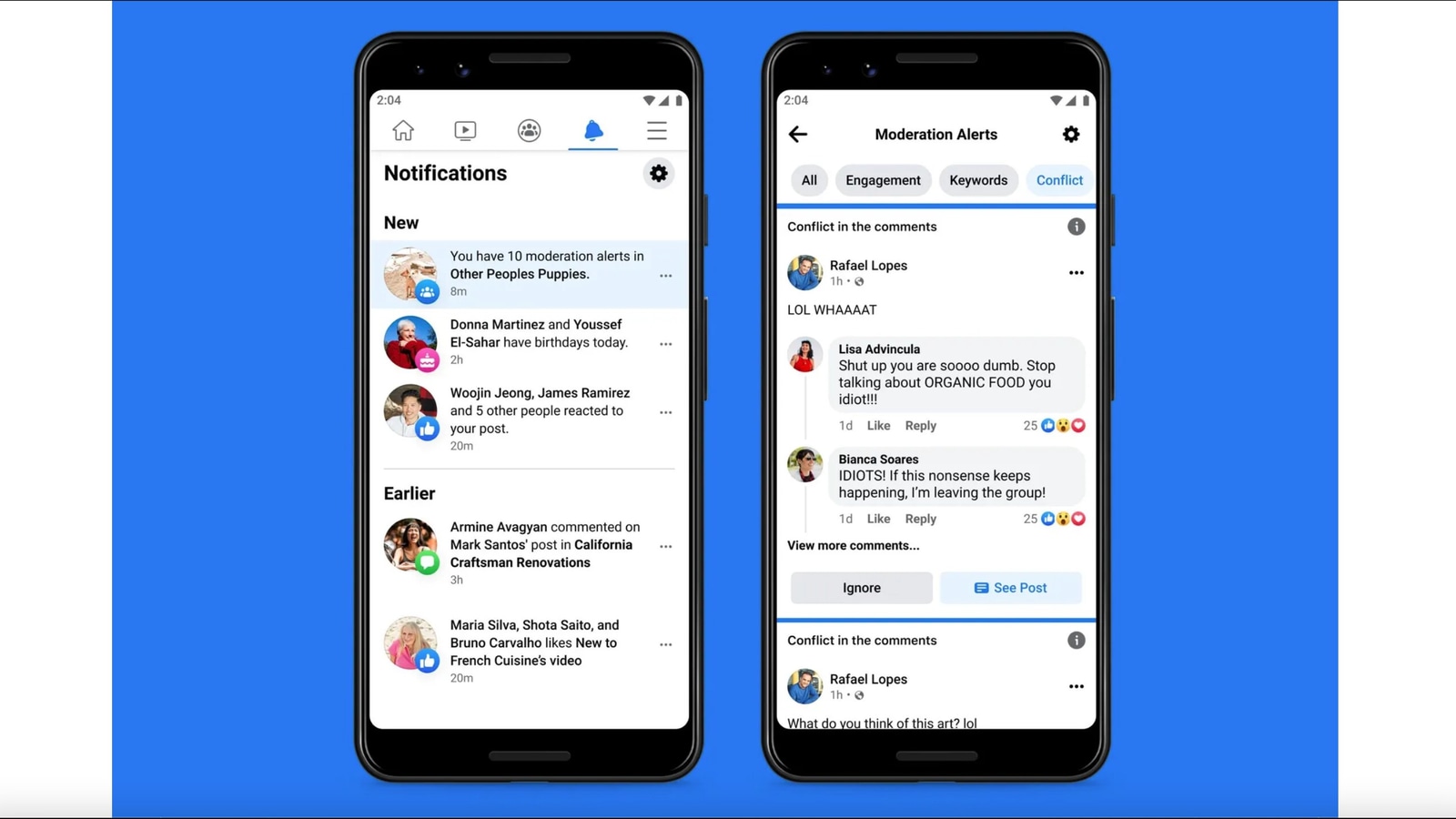

This particular tool is called Conflict Alerts, and Facebook is currently only testing it, which means there is no knowing when it will be publicly available. Conflict Alerts is similar to the existing Keyword Alerts feature, which lets admins create “custom alerts” for times when commenters use certain words and phrases. Unlike Keyword Alerts, though, Conflict Alerts does not rely on terms set manually by admins; it uses machine learning models to try to spot more subtle types of trouble.

Once group admins have been alerted, they can take action by deleting comments or, if they think it is required, booting users out of the group. Admins can also limit how often the individuals concerned can comment and control how often comments can be made on certain posts.

While Conflict Alerts sounds good in theory, there is no knowing exactly how the feature will detect “contentious or unhealthy conversations”. Facebook did not fully clarify this when The Verge reached out for comment. A spokesperson explained only that Facebook would use machine learning models to look at “multiple signals such as reply time and comment volume to determine if the engagement between users has or might lead to negative interactions”.

What can be assumed is that Conflict Alerts will use AI systems similar to those the platform already uses to flag abusive speech on the site. These machine learning models are “far from 100% reliable” and are often “fooled” by things like irony, slang, and humour. Nonetheless, the AI system should be able to pick up on more “obvious cues” that an argument is happening, like people calling each other idiots in the comments, as seen in the picture up top.

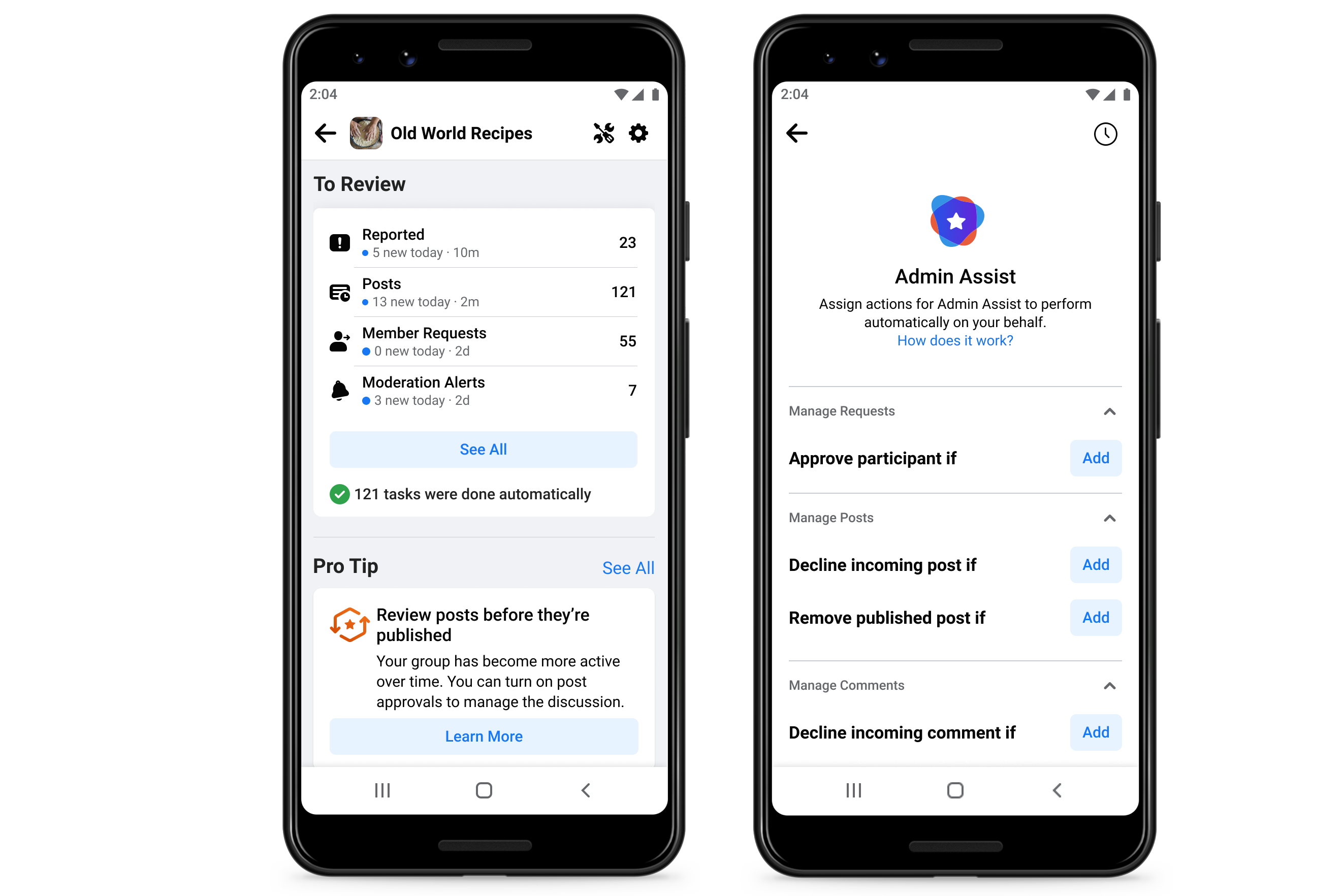

Other tools in this suite include a new admin homepage that will function as a dashboard and offer an overview of “posts, members, and reported comments”. There are also new member summaries, which compile “each group member's activity in the group, such as the number of times they have posted and commented, or when they've had posts removed or been muted in the group”. Additionally, there is a new Admin Assist feature for automated content moderation that will let admins restrict who is allowed to post comments, block recently joined users, and curb spam and unwanted promotions by banning certain links. The Conflict Alerts feature is going to be a part of Admin Assist. You can read more about the new tools here.
